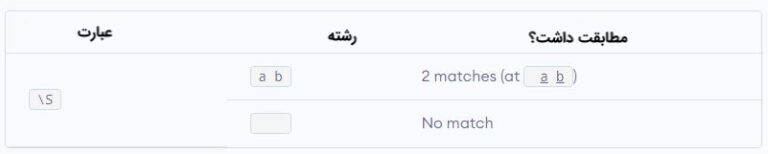

Data Preprocessing/Data Cleaning using Python:

A quick and effective way to clean text data in Python is the regex method. Python’s built-in regex module, `re`, ships with every Python version, so you don’t have to install any additional libraries. The cleaning steps are:

- Line 4: Remove web remnants such as website links (http/https/www).
- Line 5: Remove punctuation and special characters such as #, $, %.
- Line 6: Remove any numerical values present in the dataset.

Words like a, an, but, we, I, do etc. are known as stop words. We leave stop words out of the dataset because they increase the training time and don’t add any unique value. There are roughly 800 stop words in English. However, words like Yes/No sometimes play a role in problems such as sentiment analysis or reviews, so they may be worth keeping there.

Let’s discuss how to handle stop words in NLP problems. Using the NLTK library: `from nltk.corpus import stopwords`. Other libraries, such as spaCy, also ship a default stop word list.
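The regex cleaning steps and the stop word handling described above can be sketched roughly as follows. This is a minimal illustration, not the article's original code: the function names, the sample sentence, and the tiny `STOP_WORDS` and `KEEP_WORDS` sets are assumptions made for the example. In practice you would likely swap in a full list such as NLTK's.

```python
import re

# Hypothetical, deliberately tiny stop word set for illustration only.
# In practice you might use NLTK's list instead, e.g.
#   from nltk.corpus import stopwords
#   STOP_WORDS = set(stopwords.words("english"))
# (this needs the corpus downloaded once via nltk.download("stopwords")).
STOP_WORDS = {"a", "an", "the", "but", "we", "i", "do", "is", "no"}

# Words kept even when the stop word list contains them, because they can
# carry sentiment (the Yes/No case mentioned in the text).
KEEP_WORDS = {"yes", "no"}


def clean_text(text: str) -> str:
    """Lowercase, strip links, punctuation and digits, collapse spaces."""
    text = text.lower()
    # Remove website links (http://, https:// or www. up to the next space).
    text = re.sub(r"(?:https?://|www\.)\S+", " ", text)
    # Remove punctuation and special characters such as #, $, %.
    text = re.sub(r"[^\w\s]", " ", text)
    # Remove numerical values.
    text = re.sub(r"\d+", " ", text)
    # Collapse the runs of whitespace left behind by the substitutions.
    return re.sub(r"\s+", " ", text).strip()


def remove_stop_words(text: str) -> str:
    """Drop stop words from already-cleaned, space-separated text."""
    kept = [
        word for word in text.split()
        if word not in STOP_WORDS or word in KEEP_WORDS
    ]
    return " ".join(kept)


sample = "I love the new phone!!! #happy 100% http://example.com :)"
cleaned = clean_text(sample)
print(cleaned)                     # i love the new phone happy
print(remove_stop_words(cleaned))  # love new phone happy
```

Keeping the allow-list (`KEEP_WORDS`) separate from the stop word list makes it easy to protect sentiment-bearing words without editing the list you imported.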

Text can contain punctuation, stop words, special characters or symbols, which make the data harder to work with. In the example below you will learn about sentiment analysis using Python. The ideal way to start any machine learning problem is to first understand the data, then clean it, and only then apply algorithms to achieve better accuracy.

Import Python libraries such as pandas to store the data in a dataframe. We can use matplotlib and seaborn for better data analysis through visualization. The dataframe has 3 columns: id, label and tweet. For text analysis, the preprocessing step should be applied to the ‘tweet’ column because we are concerned only with the tweets. The dependent variable is the ‘label’ column, which gives the tweet sentiment as 0 (Positive) and 1 (Negative). As you would have noticed in the above output, special characters like ^^, #, :) are not useful for predicting the sentiment of the reviews.

- `Train_df.shape`: Gives the shape of the entire dataframe (7920 rows and 3 columns). It is an attribute, so it takes no parentheses.
- `Train_df.info()`: Prints information about the dataframe, including the data type of each column, non-null counts and memory usage.
- `Train_df.describe()`: Summary statistics such as mean, standard deviation and the distribution of the data, excluding NaN values.
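As a rough sketch of the inspection calls described above, the snippet below builds a small dataframe and runs them. The three rows are made-up stand-ins for the real 7920-row dataset, and the lowercase name `train_df` is used here in place of the article's `Train_df`. Only the column names (id, label, tweet) come from the text.

```python
import io

import pandas as pd

# Hypothetical three-row stand-in for the tweet dataset described in the
# text. Column names follow the article: id, label, tweet.
csv_text = """id,label,tweet
1,0,Loving my new phone ^^ #happy :)
2,1,Battery died again... #fail
3,0,Great camera and screen
"""
train_df = pd.read_csv(io.StringIO(csv_text))

# shape is an attribute: (number of rows, number of columns).
print(train_df.shape)

# info() prints column dtypes, non-null counts and memory usage.
train_df.info()

# describe() summarizes the numeric columns, ignoring NaN values.
print(train_df.describe())

# Only the 'tweet' column needs text preprocessing; 'label' is the target.
tweets = train_df["tweet"]
labels = train_df["label"]
```

With the real data you would replace the inline CSV with a call such as `pd.read_csv` on your own file path.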

[‘get ready join us 4 21 evening music celebration exploration inspiration’, ‘coral shallows aitutaki lagoon cook islands polynesia’, ‘dont miss our ama with author climber mark synnott who will be answering your questions about his historic journey north face everest today at 12 00pm et start submitting your questions here’]

This whole article may seem long and complicated, but I assure you I can summarize all the steps above in the following basic processes:

- Punctuation removal (including filtering non-alphanumeric characters if necessary).

There are other processes worth mentioning, such as lemmatization and stemming, that I didn’t explain here; they may require more computing power and can slow down your computer. I have learned that the processes I use above often already give decent results, and sacrificing running time to perform lemmatization and stemming doesn’t always lead to a better outcome. However, it always comes down to your data and your use cases, so you can always try different approaches. After all, we always need to keep experimenting to improve. I hope you find this article useful.

Data Cleaning Techniques For NLP related Problems

Data Preprocessing is an important concept in any machine learning problem, especially when dealing with text-based statements in Natural Language Processing (NLP). In this tutorial, you will learn how to clean text data using Python to make some meaning out of it.
